Your Code Comments Now Have a Second Audience

I was checking which tools GitHub Copilot could see from the MCP (Model Context Protocol) server I had just built.

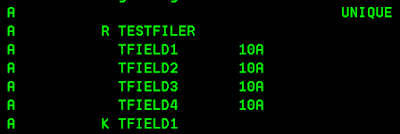

It showed a list. Each entry had a tool name at the top — the actual function name exported as an MCP tool. Next to it, in slightly smaller text, a description.

Wait, I hadn't written any descriptions. Not in the MCP configuration, not in any separate documentation file. I had written nothing that I thought of as a description.

Then it clicked. That smaller text was my docstring. The comment I wrote at the top of the function (the one explaining what it does, what arguments it takes, and what it returns) was sitting right there, shown to Copilot as the tool's documentation.

The AI was reading my code comments. Not inferring from them. Using them directly, word for word, to understand what the tool does and how to use it.

That was the moment I realized something had quietly shifted about what a code comment actually is.

The Old Job of a Docstring

In Python, a docstring is a string written as the first statement of a function. It sits between triple quotes, explains what the function does, describes the arguments, and notes the return value.
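For anyone who hasn't written one, here is a minimal docstring on a hypothetical `area` function. One detail matters for the rest of this story: unlike a `#` comment, which Python throws away, the docstring is kept on the function object as `__doc__`, where `help()` and `inspect.getdoc()` can read it.

```python
import inspect


def area(width: float, height: float) -> float:
    """Return the area of a rectangle.

    Args:
        width: The rectangle's width.
        height: The rectangle's height.

    Returns:
        The width multiplied by the height.
    """
    # A plain comment like this one is discarded; the docstring above is kept.
    return width * height


# The docstring is stored on the function object at runtime.
print(area.__doc__ is not None)  # True
# inspect.getdoc() returns it with the indentation cleaned up.
print(inspect.getdoc(area).splitlines()[0])  # Return the area of a rectangle.
```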

Developers write them for two reasons — to help the next developer who reads the code, and to satisfy the habit of documentation. Most of the time, honestly, they're written quickly and forgotten just as fast.

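They live in the code. They don't go anywhere. Python does keep them on the function's `__doc__` attribute at runtime, but apart from `help()` and documentation generators, almost nothing reads them. They're notes left behind for humans.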

That's what they used to be.

What Changed with MCP

An MCP server exposes functions as tools that AI agents can discover and call. When an agent connects to your MCP server, it needs to understand what each tool does: what it's for, when to call it, and what to pass in.

With a REST API, a developer reads the documentation and writes client code accordingly, and the application's UI guides the end user. There's always a human in the loop interpreting something.

With an MCP server, the AI agent is the one that needs to understand the tool. And it learns about the tool from — the docstring.

When I saw Copilot display my function's docstring as the tool description, I was seeing this directly. MCP gives every tool a description field that the server sends to the agent along with the tool's name and parameters, and the Python MCP SDK fills that field from the function's docstring unless you supply one explicitly. No separate documentation file. No API spec. Just the comment I wrote in the code.
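The mechanism is easy to see in miniature. The sketch below is a hypothetical, standard-library-only stand-in for what the Python SDK's `@mcp.tool()` decorator does; the real SDK builds a fuller JSON Schema from the type hints. It takes a function's name, docstring, and type hints and turns them into an entry shaped like an MCP `tools/list` result (`name`, `description`, `inputSchema`). The `tool` decorator, the `TOOLS` list, and `get_product_details` are illustrative names, not SDK API.

```python
import inspect
import json
from typing import get_type_hints

# Hypothetical registry standing in for an MCP SDK; not the real SDK API.
TOOLS: list[dict] = []

# Rough mapping from Python annotations to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def tool(fn):
    """Register fn as a tool, using its docstring as the description."""
    hints = get_type_hints(fn)
    params = inspect.signature(fn).parameters
    properties = {
        name: {"type": _JSON_TYPES.get(hints.get(name), "string")}
        for name in params
    }
    # Parameters without a default value are required.
    required = [n for n, p in params.items() if p.default is inspect.Parameter.empty]
    TOOLS.append({
        "name": fn.__name__,
        "description": inspect.getdoc(fn) or "",
        "inputSchema": {"type": "object", "properties": properties, "required": required},
    })
    return fn


@tool
def get_product_details(product_code: str) -> dict:
    """Retrieve product details for a given product code."""
    return {"product_code": product_code}  # placeholder body


# Roughly what the agent receives: the function body never appears.
print(json.dumps({"tools": TOOLS}, indent=2))
```

The printed listing is the whole point: the agent gets essentially the name, the description, and the parameter schema. The function body, the table it queries, and any `#` comments inside never leave the server.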

The docstring had quietly acquired a second job, one it was always structurally capable of but had rarely been asked to do.

What This Means for Developers

A careless docstring used to cost the next developer some confusion. They'd read the code itself to figure out what was missing.

A careless docstring on an MCP tool costs the end user. The AI agent reads that description, misunderstands what the tool does, calls it incorrectly, or doesn't call it at all when it should. The user gets a wrong answer or no answer — and has no idea the problem started with a comment someone wrote in twenty seconds.

The docstring is now user-facing. That's a different standard entirely.

A good MCP tool docstring needs to answer three things clearly:

- What does this tool do — not in developer terms, in plain terms. Not "queries the PRDDTA table" but "retrieves product details for a given product code."

- When should the agent use it — what question or request from a user should trigger this tool? The agent uses this to decide which tool to call.

- What does it need — arguments described clearly enough that the agent passes the right values without guessing.
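To make the three questions concrete, here is a hypothetical before-and-after for the product lookup mentioned above. The first docstring describes the implementation; the second says what the tool does, when to use it, and what to pass. The function names, the product code format, and the returned fields are all assumptions for illustration.

```python
import inspect


def get_product_careless(code):
    """Queries PRDDTA."""
    ...


def get_product_details(product_code: str) -> dict:
    """Retrieve product details (name, description, price) for one product.

    Use this when the user asks about a specific product by its code,
    for example "what does product AB-1001 cost?" or "describe item AB-1001".

    Args:
        product_code: The exact product code as the user gave it, such as
            "AB-1001". Do not pass a product name or a partial code.

    Returns:
        A dict with the product's details, or an empty dict if no
        product has that code.
    """
    # Hypothetical body; a real tool would look the code up in the database.
    return {}


# Show the first line of each docstring, as a tool list might.
for fn in (get_product_careless, get_product_details):
    print(fn.__name__, "->", inspect.getdoc(fn).splitlines()[0])
```

The "Use this when" paragraph is aimed squarely at the agent's decision about which tool to call, something a docstring written only for developers rarely spells out.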

When those three things are clear, the agent uses the tool correctly. When they're vague, the agent guesses — and guessing at scale, across every user interaction, compounds fast.

The Broader Shift

I've been writing code comments for years. They were always for the person who comes after me — the developer maintaining the code, the reviewer reading the PR, occasionally my future self six months later wondering what I was thinking.

Building this MCP server added someone new to that list.

The AI agent that reads my docstring has no context beyond the tool's name, its parameter list, and what I wrote. It can't look at the rest of the code and infer. It can't ask me what I meant. It reads the description, forms an understanding, and acts on it, on behalf of a real user who expects a real answer.

That's a different kind of responsibility than writing for a developer who can always dig deeper.

It's also, when you think about it, exactly the point of the design. MCP tools describe themselves, and the SDK pulls that description straight from the code. The developer writes it once. The agent uses it every time. No separate documentation to maintain, no spec to keep in sync.

The constraint is also the elegance. The docstring has to be good — because there's nothing else.

What's Next

This post covers what I discovered about how AI agents read MCP documentation. The previous post covers how to connect an on-prem MCP server to Claude Desktop when the obvious options don't work — if you're building something similar, start there.

Next — the video version of both, together. The connection problem, the solution, and this docstring moment in one place.
