How Spam Filters Work: Coding Naive Bayes from Scratch

Building a Spam Detector from Scratch: The Naive Bayes Classifier

Three billion emails are sent every hour. And nearly half of them are spam.

"Congratulations! You won free money. Click here now."

If we look at this email, we immediately realize it is spam. But how does the machine realize it?

It's an engineering problem. An email has thousands of words, and each word changes the probability of it being spam or not spam. Checking how these words relate to each other is computationally impossible.

We need to make a naive assumption to solve it. This assumption leads us to one of the most effective classifiers: The Naive Bayes Classifier.

The "Naive" Assumption

The Naive Bayes classifier assumes that all features are conditionally independent given the class.

What does this mean in simple terms? It assumes that the presence of one word does not affect the probability of another word appearing, as long as we know the class.

For example, in a spam email, the algorithm assumes that the word "free" appearing is independent of the word "money" appearing, given that we know it's spam.

But Wait... That's Not True!

You're right! Words like "free" and "money" often appear together in spam emails. That's why it's called naive.

But here's the remarkable part: Despite this assumption being wrong, the classifier works incredibly well in practice. The naive assumption simplifies computation dramatically—instead of calculating dependencies between all possible word combinations, we just multiply individual probabilities.

How Bayes Theorem Applies to Classification

For classification, we want to find the probability of a class given the features. In our case: What is the probability that an email is spam, given the words it contains?

P(Spam|Words) = [P(Words|Spam) × P(Spam)] / P(Words)

Let's break down the terminology:

- P(Spam|Words) (Posterior): The probability that the email is spam given the words.

- P(Words|Spam) (Likelihood): The probability of seeing these words in a spam email.

- P(Spam) (Prior): How common spam emails are overall.

- P(Words) (Evidence): How common these words are in general.

The Naive Simplification

With the naive assumption, calculating P(Words|Spam) becomes much simpler. Instead of calculating the probability of all words together, we multiply the probabilities of each individual word:

P(Words|Spam) = P(word₁|Spam) × P(word₂|Spam) × P(word₃|Spam) × ...

To classify a new email, we calculate this probability for both spam and not spam. Whichever probability is higher—that's our prediction!

A Simple Example: The Zero-Probability Problem

Let's walk through a simple example to see exactly how this works—and discover a critical problem.

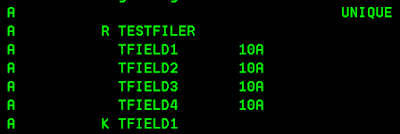

We start with just four emails. Two are spam, two are not spam:

- Email 1: "free money win" → SPAM

- Email 2: "meeting tomorrow project" → NOT SPAM

- Email 3: "free click win prize" → SPAM

- Email 4: "report meeting deadline" → NOT SPAM

Step 1: Calculate Prior Probabilities

P(Spam) = 2/4 = 0.5 and P(Not Spam) = 2/4 = 0.5

Step 2: Count the Words

We count how frequently each word appears in spam vs. not spam emails. For example, "free" appears 2 times in spam emails, "meeting" appears 2 times in not spam emails.

The Problem: A New Word

Now let's try to classify: "urgent free money"

We notice the word "urgent" never appeared in our training data. So:

- P(urgent|spam) = 0

- P(urgent|not spam) = 0

When we calculate: P(spam|email) = P(spam) × P(urgent|spam) × P(free|spam) × P(money|spam)

Since P(urgent|spam) = 0, the entire product becomes zero. The same happens for not spam.

Result: Zero probability for both classes. We cannot classify!

The Solution: Laplace Smoothing

We add 1 to all word counts. This is called Laplace smoothing or add-one smoothing:

P(word|class) = (count of word in class + 1) / (total words in class + vocabulary size)

Now no word has zero probability. Even "urgent" gets a small non-zero probability, and we can classify successfully!

This ensures that a single unseen word doesn't break the entire classification.

Building a Full Spam Detector

Now let's build a complete spam detector with a larger dataset of 30 emails (15 spam, 15 not spam).

Step 1: Preprocess the Text

First, we clean the data. For each email:

- Convert everything to lowercase

- Remove punctuation and special characters

- Split into individual words (tokens)

def preprocess_text(text):

text = text.lower()

text = re.sub(r'[^a-z\s]', '', text)

words = text.split()

return words

Step 2: Calculate Prior Probabilities

From our training data:

- P(Spam): 15/30 = 0.5

- P(Not Spam): 15/30 = 0.5

Step 3: Calculate Word Probabilities with Laplace Smoothing

For each word in our vocabulary, we calculate how often it appears in spam vs. not spam emails, applying Laplace smoothing:

For example:

- The word "free" might appear frequently in spam (P(free|spam) = 0.08) but rarely in not spam (P(free|not spam) = 0.01)

- The word "meeting" has high probability in not spam, low probability in spam

Step 4: Use Log Probabilities

When we multiply many probabilities, the product becomes extremely small—this causes numerical underflow. By taking the logarithm, we convert multiplication into addition:

log(a × b × c) = log(a) + log(b) + log(c)

This makes computation numerically stable while preserving the relative ordering of probabilities.

Step 5: Classification

When a new email arrives, we:

- Extract its words: "Congratulations! You won free money. Click here now."

- Calculate log probabilities for spam and not spam

- Compare: If P(spam|email) > P(not spam|email), classify as spam

# Calculate log probabilities

log_prob_spam = np.log(prior_spam)

log_prob_not_spam = np.log(prior_not_spam)

for word in email_words:

log_prob_spam += np.log(P_word_given_spam[word])

log_prob_not_spam += np.log(P_word_given_not_spam[word])

# Classify

prediction = 1 if log_prob_spam > log_prob_not_spam else 0

A Classification Example

Let's classify: "free money click"

We start with the prior probabilities (in log space):

- log P(spam) = log(0.5) = -0.69

- log P(not spam) = log(0.5) = -0.69

For each word, we add the log probability:

- "free": High in spam (0.08), low in not spam (0.01) → Strong spam indicator

- "money": High in spam (0.06), low in not spam (0.005) → Strong spam indicator

- "click": High in spam (0.07), low in not spam (0.02) → Strong spam indicator

After adding all log probabilities:

- log P(spam|email) = -4.1

- log P(not spam|email) = -8.5

Since -4.1 > -8.5, we predict SPAM ✓

Why Log Probabilities?

When we multiply many small probabilities (like 0.001 × 0.002 × 0.003...), the result becomes too small for computers to handle accurately—this is called numerical underflow.

By taking the logarithm, we convert multiplication into addition:

log(a × b × c) = log(a) + log(b) + log(c)

This makes computation numerically stable while preserving the relative ordering of probabilities. More words with low probabilities won't cause the product to vanish—we just keep adding log values.

Visualizing Important Words

In our Python implementation, we visualize which words are most indicative of each class by calculating log probability ratios:

- Top Spam Words: free, click, win, urgent, money, cash, offer

- Top Not Spam Words: meeting, tomorrow, please, report, project, team, thanks

This gives us insight into what the classifier has learned and helps debug misclassifications.

Why Does This Work Despite Being "Naive"?

Even though words are not truly independent, the classifier still works well because it's comparing two options. It doesn't need to get the exact numbers right—it just needs to rank spam higher than not spam for spam emails, and not spam higher than spam for legitimate emails.

- Relative Comparison: It doesn't need exact probabilities—just needs correct relative ranking

- Error Cancellation: The errors from the independence assumption often cancel out when comparing classes

- Simplification Benefits: We can train on less data and make predictions faster

The naive assumption is wrong, but it's useful. And in machine learning, useful often beats correct.

Strengths of Naive Bayes

- ✓ Simple and Fast: Easy to implement and train, even with large datasets

- ✓ Works with Small Data: Unlike deep learning models that need millions of examples, Naive Bayes works with just hundreds

- ✓ Handles High Dimensions: With thousands of words, most classifiers struggle. Naive Bayes thrives

- ✓ Provides Probabilities: Gives confidence scores, not just binary predictions

- ✓ Robust to Irrelevant Features: If some words don't help distinguish classes, they don't hurt the classifier

Limitations

- ✗ Independence Assumption: Cannot learn how words relate—won't learn that "free money" together is spammier than just "free" or just "money"

- ✗ Needs Smoothing: Without Laplace smoothing, a single unseen word breaks the classifier

- ✗ Probability Calibration: The relative ranking is good, but actual probability values might be off

Real-World Applications

Naive Bayes is used beyond spam detection:

- Sentiment Analysis: Is this movie review positive or negative?

- Document Classification: Which category does this news article belong to?

- Medical Diagnosis: Given these symptoms, what disease is most likely?

- Real-time Prediction Systems: Because it's so fast, it's used in production where speed matters

Validation: Comparing with Scikit-learn

In our Python notebook, we validate our from-scratch implementation by comparing it with scikit-learn's MultinomialNB classifier. Both produce the same results, confirming our implementation is correct!

from sklearn.naive_bayes import MultinomialNB

# Train scikit-learn model

sklearn_nb = MultinomialNB(alpha=1.0) # alpha=1.0 for Laplace smoothing

sklearn_nb.fit(X_train, y_train)

# Our accuracy: 100%

# Scikit-learn accuracy: 100% ✓

The Beauty of Simplicity

The beauty of Naive Bayes is in its simplicity. It takes a complex problem (text classification with thousands of features), makes a bold simplifying assumption (independence), and still delivers excellent results.

It's a perfect example of how sometimes, the simplest approach is the best approach.

But We're Just Getting Started...

Naive Bayes treats every word equally. But think about it—the word "urgent" carries far more information than the word "the".

How do we measure the importance of words mathematically? How do we quantify information itself?

The answer: Entropy, the math of surprise. And it changes everything.

That's what we'll explore next in our journey through Information Theory.

What We Built in the Python Notebook

In the companion notebook, we implement the entire pipeline from scratch:

- Simple 4-email toy example demonstrating the zero-probability problem

- Laplace smoothing solution

- Full 30-email spam detector implementation

- Text preprocessing and tokenization

- Prior probability calculation

- Word likelihood calculation with smoothing

- Log probability implementation to prevent underflow

- Classification function

- Word importance visualization

- Comparison with scikit-learn validation

We explore the mathematical foundation in detail, showing how Bayes theorem transforms into the classification rule, why log probabilities prevent numerical underflow, and why Laplace smoothing is necessary.

Get the Code

Want to build your own spam detector from scratch? Check out the Python Notebook with complete implementation and visualizations.

This post is part of the "Probability for Machine Learning" series. For the previous part, check out: Why MLE Fails: Decoding Bayesian Inference.

great

ReplyDeleteBuilding a Spam Detector from Scratch using the Naive Bayes Classifier is a classic and effective approach in machine learning. The method is based on Bayes' Theorem, which calculates the probability of a message being spam given its features. In this project, emails or messages are first preprocessed by cleaning text, removing stopwords, and converting words into numerical representations. The model assumes feature independence, meaning each word contributes independently to the probability calculation. Training involves calculating prior probabilities of spam and non-spam classes and likelihood probabilities of words appearing in each class. Once trained, the classifier can predict whether a new message is spam or not based on learned probabilities. Variants like Multinomial and Bernoulli Naive Bayes are commonly used for text classification tasks. Despite its simplicity, Naive Bayes performs surprisingly well for spam detection problems. It is computationally efficient and works well even with relatively small datasets. Overall, this project demonstrates fundamental concepts of probabilistic learning and text classification in a practical way.

ReplyDeleteText Classification Projects

Thanks for such a detailed summary—really appreciate you taking the time to break it down so clearly!

DeleteYou’ve nicely captured the core idea behind Naive Bayes and its practicality for spam detection.

I especially agree with your point about its effectiveness even with smaller datasets—that’s one of the reasons it’s still widely used despite its simplicity.

I’m planning to explore more advanced approaches and also compare them with Naive Bayes in future posts. Would love to hear your thoughts on those as well!